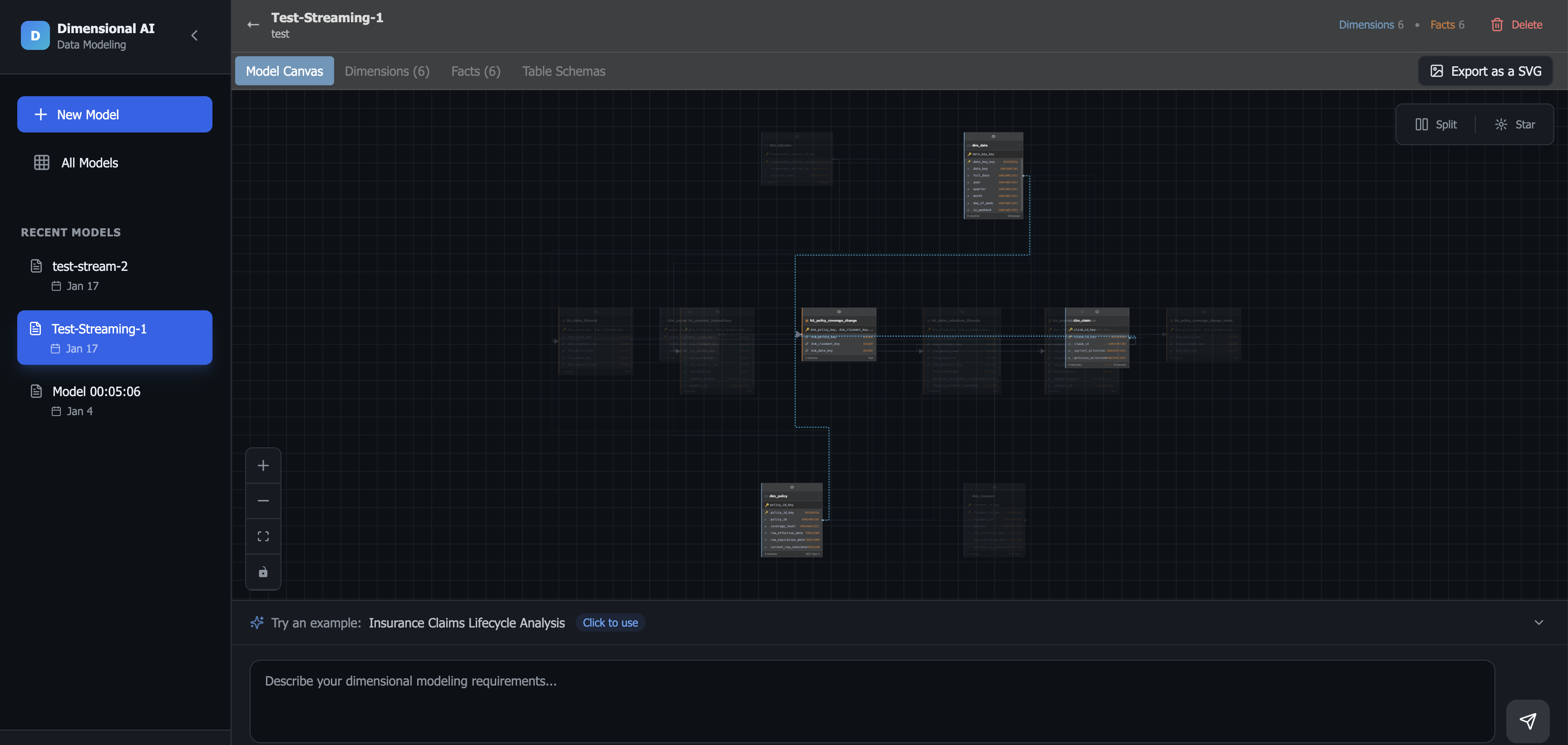

Dimensional Modeler

AI-powered schema design. Describe your analytics requirements in plain English and get production-ready star schemas with facts, dimensions, and DDL.

$ npm start

/* production-grade infrastructure for ambitious teams */

Building tools and services for Big Data, ETL, and MLOps. We help teams move fast without breaking things.

Distributed architectures that scale from gigabytes to petabytes. We modernize data lakes and warehouses for speed and cost-efficiency.

Robust, self-healing pipelines with full observability. We handle the messy reality of data integration so you don't have to.

From research to production. We build feature stores, model deployment pipelines, and monitoring systems for AI at scale.

Visual pipeline design for data engineers. Document, plan, and communicate ETL processes with your team.

Turn screenshots and photos of diagrams into fully editable Draw.io files and other open formats using computer vision.